
Infrequent & Divergent Thoughts & Images

Filming Leaders in Collier County Schools

*Stream of Conscience Regarding Pre-Gesture, Erasure, and Silence- Part 1

*This post was largely drafted via dictation to Siri (iPhone 5) en route to work.

Francis Bacon, a largely self-taught artist, said that the first mark on the canvas was the most powerful.

Bacon had an artistic vision that was informed by the various media of his time. So, images from movies like Battleship Potemkin and images of the pope frequently and easily entered the frame of his canvas. Bacon was painting not things or portraits but a visual schema of his psyche, one littered and entangled with images both representational and symbolic.

What of the impulse before the gestural scraping of paint onto the canvas for that first “indelible mark”?

What I’m asking is this: what happens to the paint in the heart and the mind before the first mark on the unprimed canvas? Ever prolific, Bacon left a record of this too. He would test colors, and the way his brush hit a surface, on the walls of his studio.

What of all of this practice? Is it meaningful? Is it something to be recorded? Is it more than practice?

It felt like scientific study. A question is asked, a hypothesis is pronounced or declared, and off goes the scientist to decide if the process or the outcome is something worth investigating further. It always felt like this for me. I would grab my alto saxophone or my trumpet and play completely alone in a room or a racquetball court somewhere in town. Almost everything I played was recorded on a cassette. I suppose I recorded out of habit, or out of a need to hear what those sounds sounded like not only while I played them but also once I was out of that moment.

The sound invariably changed once I left the moment of actual creation. My ear would pick up things that I simply liked in retrospect, but it felt like something worthwhile. The process became the art: the meaningful pursuit of some communication, or the meaningful pursuit of a way to communicate with sound. The communication may have been shared only with myself at the time, but it had some significance in the way I processed information in a variety of other subjects.

This morning I’m listening to David Gross. His album, Things I’ve Found to be True, captures, I suppose, this gesture before the published gesture. However, none of this feels like simple practice. It feels very much like David Gross is scraping into the messy areas of creativity to find something just beyond his reach.

Do the quiet scrapings that move through the sound space of Gross’ record originate from the same space as the automatism-based drawings of Robert Motherwell or Matta (his teacher)?

Does this experiment in silence or near silence have any root in the foundation of the movement known as “New London Silence,” with which cellist Mark Wastell and harpist Rhodri Davies are associated?

Heddy Boubaker’s Lack of Conversation does the same, albeit with a dramatically different process. Boubaker may focus more on filled space than Gross, but both have an intense need to do things with the saxophone that may not have been heard before their respective recordings. It’s not so much about recording something that’s never been done as it is about recording something without knowing what it’s going to sound like before playing. It becomes an act of freedom and also bravery.

Something about this area of artistic creativity reminds me of Paul Auster’s White Spaces, a prose piece (almost a prose poem; it is included in his collected poems) that captures a sense of emptiness (or, rather, openness) that very few artworks capture. There is a great deal about this piece here.

In the last few years, several musicians have taken to almost “erasing” their sound down to bare, minimal marks. Assembling just the brass and woodwind players alone, one could create an orchestra of silence or near silence. These include Nate Wooley, Axel Dörner, Radu Malfatti, Greg Kelley, etc. This list could go on for days if one were to collect the names of musicians who often work on the outside of normally accepted musical sound, like Gabriel Paiuk (sound artist from Buenos Aires) and Taku Sugimoto (guitarist from Japan). The list is endless because artists are all searching for that method of communication in a time when so much sound probably fills our day; it’s a stripping away to the essential elements that inform our memory or thought around the subject. It may even be the stripping away of the subject itself.

One famous example of this is Robert Rauschenberg’s Erased de Kooning Drawing, a pencil drawing by Willem de Kooning that Rauschenberg erased after being given permission by the artist. The image, or what is left of the image, is mounted in a beautiful frame. The “indelible marks” are almost nonexistent; they become pentimenti.

What is it that draws me to this openness? This erasure?

Why am I entranced by Mary Ruefle’s Little White Shadow, a short book, appropriated and erased by Ruefle in the creation of something new? Isn’t this a reworking of Radi Os (Ronald Johnson) or A Humument (Tom Phillips)?

Why do I create work that is also engaged in some secret history of absence or erasure? What do I gain from it?

Why do I create musical works (like the one linked) that attempt to do much the same as these? Or like this one (Track 9)?

There is so much more that could be connected…so much more ether, nothingness, absence, silence.

Maybe I am interested in exploring this creative “first act” or non-act…because I am a teacher. The moment that a student learns…there is a magic that happens in that moment. Some term this a “lights go on” moment. Some call it awareness. I think it has to be the most powerful emotional moment…reaction to awareness of learning. It is something beyond what I could ever hope to describe.

As I write this free thought exploration down, I am thinking about how art isn’t really separate from that moment at all. It is that moment. It must capture something that cannot be captured, something that is just outside of normal “practice” or just beyond reach. To get there, maybe we have to erase what has come before.

Maybe we have to erase ourselves a bit.

Perhaps all art needs to be destroyed and erased and lost in order to build the simplest of blocks of coherent expression back into existence. Maybe, it’s a building back to relevance.

Influences (Listening, Watching, & Reading)

*I first learned of John Cage’s work when I attended high school. I can’t remember if it was art class or something else. But, I was tipped off somehow. My love of his ideas at a young age even involved me stealing a plastic record out of a library book just to hear the piece. It had never been played, although the book was probably 20 years old at the time. It really does prove the point that if you want to do something subversive in society (artistically), hide it in a book.

John Cage has always been an important influence on my thinking in regard to making things, whether they be written or drawn or played. Of the many recordings available, the most treasured ones include his own voice. Those really captured something for me. From the moment I first heard Indeterminacy (with sounds performed by David Tudor), I was hooked. In fact, Variations was the next stop…but only a short one…then back to the “readings” he’d recorded. I had moments of addiction to his visual work (example 1 and example 2). But that didn’t last long.

The most important recording was an 8-CD set published by Wergo a few years ago. It came with a hefty price of just over $100.

However, you can now hear this work in its entirety (thanks to Open Culture):

Listen to Cage’s Diary for free now.

While you are at it, download the “prepared piano” app.

This is 40: First Master’s Level Race

*Pillars (Clarinet)

*Poem fragment 7-29-14

*Leave these serrated veins

inside my legs

when rain wishes to be me &

I wish to be rain.

Q Methodology: A Brief Background and Sample Pilot Study with School Principals (Student Paper Draft, 2007)

*No copy-editing has occurred to provide this post some clarity. APA is almost ignored. But, the general curiosity remains. I have always been interested in identity and how we define ourselves throughout our lives and careers. The following was a brief paper and pilot “study” completed with a group of principals in 2006.

Very Brief Background

Q-factor analysis originated soon after Charles Spearman invented factor analysis at the start of the twentieth century. According to Steven R. Brown (1980), factor analysis has historically been “used as a procedure for studying traits.” In this role, factor analysis has been popularized by social and political science. However, Brown explains that factor analysis can also be used to factor persons, thereby creating what William Stephenson (1953) terms “person-prototypes.” This, Brown asserts, would require a separate methodology. This methodology, called Q, is described by Hair et al. (1998) as “a method of combining or condensing large numbers of people into distinctly different groups within a larger population.”

Although G. H. Thomson was the first researcher to work with Q-factor analysis, he was not positive about its future (Brown, 1980). He believed that it had serious deficiencies, which I will discuss further in a moment. William Stephenson, however, was more excited about the possibilities of Q. Since then, Q-factor analysis has been used widely in the social and behavioral sciences.

The main structural difference between Q and R analysis is summed up by Raymond Cattell’s description of the “data box” (1988). Cattell names three main components of a factor analysis: persons or cases, items, and occasions. He explained that how we organize these components structurally changes the procedure. For example, in R-factor analysis the items form the columns of a matrix, while the persons completing the items form the rows. In this arrangement, the items would be grouped into fewer factors, thereby creating types of items. Inversely, in Q-factor analysis, one places the persons in the columns and the items in the rows. This process creates person-prototypes, as previously mentioned.
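The reorientation Cattell’s data box describes can be sketched in a few lines of Python. This is an illustrative sketch, not part of the original study: the scores are hypothetical, and `pearson`, `transpose`, and `correlate_columns` are helper names introduced here. Correlating the columns of a persons-by-items matrix gives the R-analysis (item-by-item) correlations; transposing first, so that persons become the columns, gives the Q-analysis (person-by-person) correlations from which person-prototypes are factored.

```python
import statistics


def pearson(x, y):
    """Pearson correlation between two equal-length sequences of numbers."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def transpose(matrix):
    """Swap rows and columns of a list-of-lists matrix."""
    return [list(column) for column in zip(*matrix)]


def correlate_columns(matrix):
    """Correlate every pair of columns; returns a square correlation matrix."""
    columns = transpose(matrix)
    return [[pearson(a, b) for b in columns] for a in columns]


# Hypothetical scores: each row is a person, each column an item (R orientation).
data = [
    [7, 5, 4, 1],
    [6, 5, 3, 2],
    [2, 3, 5, 7],
]

r_matrix = correlate_columns(data)             # 4 x 4: items correlated with items
q_matrix = correlate_columns(transpose(data))  # 3 x 3: persons correlated with persons
```

This shows only the arithmetic of the transposition. As the Controversy discussion explains, Stephenson argued that reusing data collected for R-analysis this way does not by itself make a Q study.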

The person-prototype idea has been influential in the social sciences. Researchers are able to make a case for a certain person type linked to various areas of behavioral disorders. One such study, conducted by Porcerelli, Cogan, and Hibbard (2004), was designed to better understand the personality traits of men who were violent towards their partners. The Q-sort was very large, 200 items long, and was completed by several psychologists and social workers very familiar with many cases of domestic abuse. The end result supported the notion that these men were “antisocial and emotionally dysregulated.” Thus, it may be argued that these violent men have some things in common that set them apart from others; in other words, they form a person-prototype.

Q-methodology has recently been employed by other fields of inquiry as well. In their work on student affairs problems on college campuses, Woosley, Hyman, and Graunke (2004) used a sample of only three participants to explore whether Q would be a promising tool for evaluating the student experience. When they asked these participants to sort ideas concerning their jobs on campus, they found that the students were excited about the process. During a post-sort interview, all three expressed enthusiasm for the activity and the results.

Controversy

Even with positive stories of Q like these, there are a few reasons why some researchers refuse to use this methodology or see little potential in it. For example, one could easily infer from the discussion of the data box that a researcher could simply take a set of data gathered for an R-factor analysis and apply it to the Q structure, thereby completing another full analysis of the same information. Cyril Burt championed this form of usage in the 1930s (Stephenson, 1953). This was one point of contention for Stephenson, who explained that the procedure for collecting the data was part of the methodology. He stated that the Q-sort, the activity of participants physically sorting items in a prescribed pattern under certain conditions, was part of the overall methodology. One could not collect data for the specific purpose of running an R-factor analysis and simply rearrange it in a way appropriate for Q-analysis.

Many researchers disregard Q-factor analysis due to its lack of generalizability. They may claim that results from such a small sample could never be applied to a much larger population. In this respect, they may be correct. A Q-analysis is really meant to be something like a case study. It may be applied in some fashion to another situation, but the data capture a single moment in time, or an occasion.

One of my main reservations about Q-methodology is its reliance on researcher-designated language. The language, or the set of items selected for the sort, is chosen by the researcher, not the participants. Thus, there may be some error in communication.

Sample Q-Sort Methodology

The particular focus of my sample Q-sort was a group of principals who are currently participating in the North East Florida Educational Consortium Principal Leadership Academy (PLA). The academy is only a year old, and the current version is a pilot run of a program designed for principals undergoing preliminary training to facilitate a school-wide action research project. The academy is composed of twenty-four participants, ranging from early-career principals with little experience to seated principals with a great deal of experience. Because leadership experience varied widely among the group members, my hypothesis was that these principals could be arranged in groups by experience and/or leadership style.

The items I decided to use in the Q-sort were the behaviors that the state suggested to districts as possibly associated with the ten newly adopted Florida Principal Leadership Standards (April 2005). Of course, these behaviors were all optimal based on the standards. Thus, a sort using these written behaviors would have no “wrong” answers. This was important in establishing trust between the participants and me: the sort was not a competition or an evaluation of how they related to and sorted these behaviors. If they were aware at the outset that there was no “correct” way to sort the items and no evaluative component to the sort, they might be more honest in the sorting process.

Another dimension that could be added to the sort, possibly yielding richer results, would be the grouping of leadership behaviors into two categories: transactional leadership behaviors and transformational leadership behaviors. Because these behaviors were never verified to actually represent either form of leadership, the Q-sort would have to be labeled an unstructured sort (Appendix A).

After deciding which behaviors I would use as my items (16 sentence strips), I turned my attention to the actual Q-sort process.

Consulting Fred Kerlinger’s Foundations of Behavioral Research (1973), I was able to formulate a methodological plan. Kerlinger clearly maps out the process of setting up a practice Q-sort activity, or what he calls a “miniature Q-sort.” He writes that participants may sort only a few items, as few as ten, though he goes on to explain that this would not be optimal: the more items one makes available for the participants to sort, the better the results. Another useful piece of information was the discussion of the sort design. Kerlinger describes the physical act of sorting the items. He sets out a wonderful method of arranging the items into a quasi-normal distribution on a seven-point Likert-type scale, where the participant chooses whether each item is most like them or least like them. The participants are limited in the number of items they can place at a given point. With this method of distribution, the sort resembles a normal curve. I used this example to help plan the sort activity with the principals in the PLA.

In the example below, the top line is the number of items that may be placed at each point on the Likert-type scale, and the bottom line is the scale itself, where 7 = items most like me and 1 = items least like me. The quotas add up to 1 + 2 + 3 + 4 + 3 + 2 + 1 = 16, one slot for each behavior strip.

Items allowed: 1 2 3 4 3 2 1

Scale point:   7 6 5 4 3 2 1
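Because each scale point has a fixed quota, a completed sort can be checked mechanically before the data are entered. The sketch below is a hypothetical helper, not something used in the original study; it assumes each participant’s data sheet is recorded as a mapping from strip number to scale point, and it treats the quotas as exact, since sixteen strips exactly fill the sixteen slots.

```python
from collections import Counter

# Quota per scale point from the sort design (7 = most like me, 1 = least like me).
TEMPLATE = {7: 1, 6: 2, 5: 3, 4: 4, 3: 3, 2: 2, 1: 1}


def check_sort(placements, template=TEMPLATE):
    """Return a list of problems with one participant's sort; an empty list means valid.

    `placements` maps each behavior-strip number to the scale point it was placed on.
    """
    problems = []
    slots = sum(template.values())  # 16 for this design
    if len(placements) != slots:
        problems.append(f"expected {slots} strips, got {len(placements)}")
    counts = Counter(placements.values())
    for point, quota in template.items():
        if counts[point] != quota:
            problems.append(f"point {point}: {counts[point]} strips, quota is {quota}")
    for point in counts:
        if point not in template:
            problems.append(f"point {point} is not on the scale")
    return problems


# A hypothetical sort that fills every quota exactly.
example_sort = {1: 7, 2: 6, 3: 6, 4: 5, 5: 5, 6: 5, 7: 4, 8: 4, 9: 4, 10: 4,
                11: 3, 12: 3, 13: 3, 14: 2, 15: 2, 16: 1}
print(check_sort(example_sort))  # []
```

Moving any one strip to a different point breaks two quotas at once (one point gains a strip, another loses one), so a single misplacement shows up as two problems.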

The sixteen items could be sorted in this quasi-normal distribution very easily by the participants. Each behavior strip contained a number, so that the participants could easily record its placement on the data sheet provided (Appendix B). I created ten identical envelopes, each containing the sixteen principal behavior strips. Then, I created a large poster displaying the procedures of the sort and the limit for each placement. The principals would sort the behaviors after an already scheduled PLA meeting, seated apart mostly to give each participant space.

On November 2, 2005, the participants completed the sort and carefully filled out the corresponding data sheet as I monitored. The purpose of monitoring was to ensure that the stated procedures were completed successfully, and this was explained to the participants. Once I had collected all of the data, I began entering it into SPSS 13 to start the analysis. SPSS is really set up with R-factor analysis in mind: the columns are mostly used for organizing items (refer back to the discussion of Cattell’s data box). However, it is important to note that one may enter the participants in the columns (as nominal data). Then, one can easily enter the numbers of the sorted behaviors as the rows. From this point on, the factor analysis procedure is the same.

Analysis and Interpretation

In this discussion of the results of this practice Q-analysis, I will also address the interpretation of Q-analysis results in general. I will refer to Tables 1-6 and Figure 1, produced by the SPSS software during this practice analysis.

Table 1 displays the correlations between the individuals based on how they sorted the leadership behaviors. We started with ten individuals, and the matrix contains a row and a column for each of them. This matrix enables the researcher to make some general statements about how each participant correlated with the others. Remember, 1.0 is a perfect correlation, so the values of 1.0 on the diagonal are each person correlated with themselves. Following Hair et al.’s (1998) guidance on cut-off points, correlations under .450 fall below the cut-off and are not examined further. This makes sense, because the researcher is really looking for correlations nearer to 1.0, as stated above. For example, there is a strong correlation between LF and LB (.625), so we could state that they may have sorted somewhat similarly. Inversely, M is hardly correlated with LB at all (.050), suggesting that these two individuals may have sorted the behaviors very differently. However, this is all that we can ascertain at this point.
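Applying the .450 cut-off amounts to a simple filter over the person-by-person correlations. In this sketch the two correlations are the ones reported above; the function name is hypothetical, and comparing the absolute value (so that a strong negative correlation also passes) is an assumption of this sketch, since the text gives only the cut-off.

```python
# The two person-by-person correlations reported in the text.
reported = {
    ("LF", "LB"): 0.625,
    ("M", "LB"): 0.050,
}


def pairs_at_or_above(correlations, cutoff=0.450):
    """Keep pairs whose correlation magnitude reaches the cut-off, strongest first."""
    kept = [(a, b, r) for (a, b), r in correlations.items() if abs(r) >= cutoff]
    return sorted(kept, key=lambda pair: abs(pair[2]), reverse=True)


print(pairs_at_or_above(reported))  # [('LF', 'LB', 0.625)]
```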

Table 1.

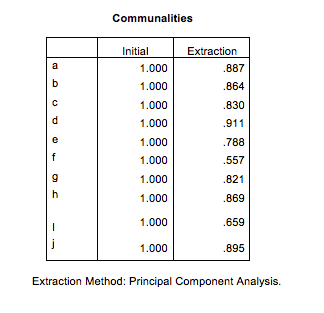

In Table 2, the researcher focuses on communalities, or how much of each original participant/factor was extracted/recreated in the analysis. The most glaring observation is that N (.557) shares the least in common with the group as a whole. At this point, it would help the reader to know that N was the only non-principal participant in the sort. I offered her the chance to take part so that there would be an even ten participants in the activity. N has never worked in an educational administration position.
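A communality is the sum of a participant’s squared loadings across the retained factors, so a low value such as N’s means the retained factors reproduce that participant’s sort less fully. A minimal sketch with hypothetical loadings on four factors (these are not the study’s values):

```python
def communalities(loadings):
    """Communality of each row: the sum of its squared loadings on the retained factors."""
    return [sum(a * a for a in row) for row in loadings]


# Hypothetical loadings on four retained factors for two participants.
example = [
    [0.80, 0.30, 0.20, 0.10],
    [0.20, 0.10, 0.70, 0.10],
]
print([round(h, 3) for h in communalities(example)])  # [0.78, 0.55]
```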

Table 2.

Extraction Method: Principal Component Analysis.

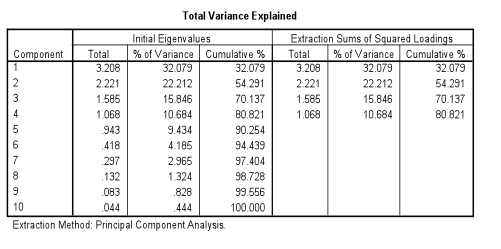

The next step in the analysis is to look at the eigenvalues and the percent of variance explained by the factor analysis (Table 3). When the data were entered, I chose to isolate the factors with eigenvalues of 1.0 or greater. This yielded four factors or, in the terms of the SPSS output, components. These factors/components are the “person-prototypes” discussed earlier. From this table, one can discern that almost 81% of the variance is explained by the four retained components. This is very positive for two reasons: first, four factors or person-prototypes was a good number to represent most of the variance; second, four factors is a good reduction from the original ten.
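The eigenvalue-of-1.0 rule and the percent-of-variance figures can be reproduced by hand. This sketch uses hypothetical eigenvalues for ten participants (they are not the values in Table 3), and the function name is illustrative. It relies on the fact that, in a principal component analysis of a correlation matrix, the eigenvalues sum to the number of variables, so each eigenvalue divided by that sum is its share of the variance.

```python
def kaiser_summary(eigenvalues, cutoff=1.0):
    """Count components with eigenvalue >= cutoff and the % of variance they explain."""
    total = sum(eigenvalues)  # equals the number of variables for a correlation matrix
    retained = [ev for ev in eigenvalues if ev >= cutoff]
    percent_each = [100 * ev / total for ev in eigenvalues]
    cumulative = 100 * sum(retained) / total
    return len(retained), percent_each, cumulative


# Hypothetical eigenvalues for ten participants (not the study's Table 3 values).
eigs = [3.4, 2.0, 1.5, 1.1, 0.7, 0.5, 0.3, 0.2, 0.2, 0.1]
n_factors, pct, cum = kaiser_summary(eigs)
print(n_factors, round(cum, 1))  # 4 80.0
```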

Table 3.

A scree plot of the factors confirms that four factors/components is a good representation of the whole. To read the scree plot in Figure 1, one must look for the point at which the downward slope of the line flattens into a plateau. It is apparent to me that the original decision on the number of factors was a wise one: the plateau of the scree appears after the fourth factor. One may argue that the line does not actually level off there so much as tick upward slightly. However, being aware that this point represents the odd one out, N (the participant with no experience in an educational leadership role), I believe that four factors represent the whole best. After reviewing this output originally, I ran the analysis again, isolating only three factors. However, a few of the original participants/factors were then left out of the whole. Thus, I opted for the four-factor model.

Figure 1.

In the next step of the analysis, the researcher begins to examine the extent to which each original factor is represented by the four composite factors or prototypes. The first matrix (Table 4) shows how each of the original participants is represented by the four factors before rotation, the step that distributes the variance more evenly amongst the factors. In other words, we can see which of the person-prototypes each individual fits best. For example, M is clearly more associated with the first extracted factor. With this matrix, we can only begin to see how the participants might relate to the person-prototypes created. To gain a clearer picture of the relationship between the participants and the composite factors, one needs to consult the rotated component matrix.

Table 4.

In Table 5, the output from the rotation (using the varimax criterion) describes more clearly how well each participant is represented by each of the factors. With background knowledge of all the participants, I could easily see a justification for each participant’s placement in the matrix. I set the analysis in SPSS to sort the output by size, so the participants who share the most in common are grouped together. In the first column, the first three participants load strongly on the factor, at .917, .868, and .659 respectively. It is interesting to note that these three principals, represented by the first factor, are the three most experienced of the participants. Additionally, these three administrators started a statewide reading reform together and have met monthly for the last five years to discuss and share ideas about the reform.
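Varimax chooses an orthogonal rotation that maximizes the variance of the squared loadings within each factor, pushing each loading toward either zero or a large magnitude, which is why the rotated matrix is easier to read. The sketch below is a minimal pure-Python version of Kaiser’s pairwise procedure with Kaiser row normalization; it is an illustration, not SPSS’s own routine, whose convergence settings may differ, and the function name and test matrix are hypothetical. For each pair of factors, the angle comes from the closed-form solution tan(4*phi) = (D - 2AB/p) / (C - (A^2 - B^2)/p), with A, B, C, and D defined in the code.

```python
import math


def varimax(loadings, normalize=True, max_sweeps=100, tol=1e-12):
    """Rotate a loadings matrix (one row per participant, one column per factor)
    with Kaiser's pairwise varimax and return the rotated matrix."""
    p = len(loadings)
    k = len(loadings[0])
    a = [list(row) for row in loadings]
    # Kaiser normalization: scale each row to unit length so every participant counts equally.
    h = [math.sqrt(sum(x * x for x in row)) or 1.0 for row in a]
    if normalize:
        a = [[x / hi for x in row] for row, hi in zip(a, h)]
    for _ in range(max_sweeps):
        largest_angle = 0.0
        for j in range(k - 1):
            for m in range(j + 1, k):
                # u and v are the squared-loading terms for the factor pair (j, m).
                u = [row[j] ** 2 - row[m] ** 2 for row in a]
                v = [2 * row[j] * row[m] for row in a]
                A, B = sum(u), sum(v)
                C = sum(ui * ui - vi * vi for ui, vi in zip(u, v))
                D = 2 * sum(ui * vi for ui, vi in zip(u, v))
                # Closed-form angle that maximizes the varimax criterion for this pair.
                phi = math.atan2(D - 2 * A * B / p, C - (A * A - B * B) / p) / 4
                largest_angle = max(largest_angle, abs(phi))
                c, s = math.cos(phi), math.sin(phi)
                for row in a:
                    x, y = row[j], row[m]
                    row[j], row[m] = x * c + y * s, -x * s + y * c
        if largest_angle < tol:
            break
    if normalize:
        a = [[x * hi for x in row] for row, hi in zip(a, h)]
    return a


# Usage: blur a clean two-factor structure by rotating it 30 degrees,
# then let varimax recover it.
simple = [[0.9, 0.0], [0.8, 0.0], [0.0, 0.9], [0.0, 0.7]]
t = math.radians(30)
blurred = [[x * math.cos(t) - y * math.sin(t), x * math.sin(t) + y * math.cos(t)]
           for x, y in simple]
rotated = varimax(blurred)
```

Because every step is an orthogonal rotation within a pair of factors, each participant’s communality is unchanged; only the distribution of variance across the factors shifts.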

Table 5.

On the next factor, F (-.915) and K (.904) are represented. Their loadings have opposite signs, so F, it appears, is strongly negatively correlated with K. K has been a principal for one year and previously worked as an assistant principal to one of the participants represented in the first factor. F was the principal of a failing school last year and is now a new principal at a K-22 special needs school. They actually appear to have sorted the behavior strips in almost exactly opposite ways.

The third factor has LB (.928), LF (.716), and N (.714) loading together. LB and LF are both first-year principals. N is the non-principal in the group, as stated previously. It makes sense to me that they may have sorted the behaviors similarly.

The final factor includes BA (.790) and R (.560). R does not appear to correlate highly with any of the four factors. This grouping is the only one that seems to contain two individuals that have very little in common in their backgrounds. When I forced the analysis to create only three factors/components, BA was left out of the final grouping of factors.
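Reading a rotated matrix this way, with each participant placed on the factor where their loading is largest in magnitude and members listed from the strongest loading down, can be sketched as follows. The loadings and labels are hypothetical, and while SPSS’s sort-by-size option orders rows in a similar spirit, this is not its exact algorithm. A factor can be bipolar: a large negative loading, like F’s, still places a participant on that factor, at the opposite pole from those with large positive loadings.

```python
def group_by_primary_factor(loadings, labels):
    """Assign each participant to the factor with the largest absolute loading,
    then list each factor's members from the strongest loading down."""
    groups = {}
    for label, row in zip(labels, loadings):
        best = max(range(len(row)), key=lambda j: abs(row[j]))
        groups.setdefault(best + 1, []).append((label, row[best]))
    for members in groups.values():
        members.sort(key=lambda member: abs(member[1]), reverse=True)
    return dict(sorted(groups.items()))


# Hypothetical rotated loadings (two factors, four participants).
labels = ["P1", "P2", "P3", "P4"]
loadings = [
    [0.85, 0.10],
    [0.20, -0.90],
    [0.15, 0.88],
    [0.60, 0.30],
]
print(group_by_primary_factor(loadings, labels))
# {1: [('P1', 0.85), ('P4', 0.6)], 2: [('P2', -0.9), ('P3', 0.88)]}
```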

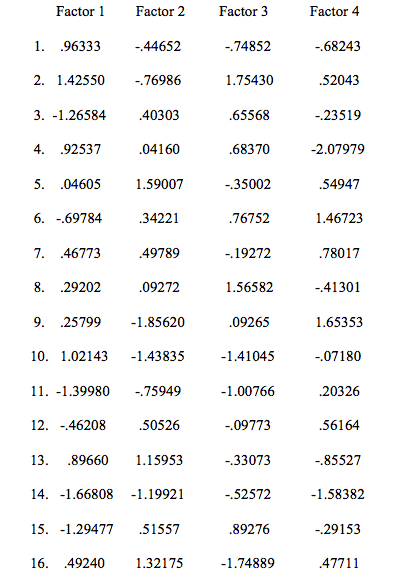

Before leaving the analysis, it is important to address one last piece: the variables/behavior strips and their value on each of the factors (Table 6). These values are in the form of Z-scores, making it easier to see how each person-prototype sorted each behavior (interpreted by columns) and how each behavior strip was comparatively sorted in each person-prototype (interpreted by rows). Daniel (1990) explains that these values, or standardized regression factor scores, are “utilized to determine which items contributed to the emergence of each of the person factors.” Remembering that the first eight strips were designated as transformational leadership behaviors and the second eight as transactional leadership behaviors, one can now find some patterns in how the prototypes sorted. It could be argued that the first group sorted the transformational leadership behaviors as more like them than the transactional leadership behaviors. For example, behavior strips #1, 2, and 4 all score very highly in the matrix for the first group. In contrast, the third group scored behavior #1 negatively, as less like them. However, the third group also scored transformational behavior strips #2 and 4 highly.
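The standardization step can be sketched as below: converting one prototype’s raw scores on the sixteen strips to z-scores and comparing the average for strips 1-8 (labeled transformational) with strips 9-16 (labeled transactional). The raw scores are hypothetical, the sketch uses the population standard deviation, and it does not reproduce how SPSS estimates the regression factor scores in the first place.

```python
import statistics


def z_scores(values):
    """Standardize raw scores to mean 0 and (population) standard deviation 1."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return [(v - mean) / sd for v in values]


# Hypothetical raw factor scores for one person-prototype on the 16 behavior strips.
raw = [6.1, 5.8, 3.9, 6.4, 4.2, 4.8, 3.5, 5.0,
       3.1, 4.0, 2.6, 3.3, 4.4, 2.9, 3.8, 2.2]
z = z_scores(raw)

# Strips 1-8 were labeled transformational and 9-16 transactional in the study design.
transformational = statistics.fmean(z[:8])
transactional = statistics.fmean(z[8:])
print(round(transformational, 2), round(transactional, 2))
```

Because z-scores average to zero across all sixteen strips, the two halves’ means here are mirror images; a clear gap between them is what would mark a prototype as leaning transformational or transactional.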

Table 6.

Taking into account that the scores did not really follow a trend that established any of the groups as definitively transformational or transactional, it is probably safe to say that the participants were grouped according to another criterion. We can say that the participants were grouped with others who sorted a set of behaviors similarly on that day at that time.

There are two facets of the Q-sort and analysis that I would change if I were to conduct a similar study in the future. To begin, it would be more structurally sound to use many more behaviors in the sort. The added information that they could rate may yield different results in the analysis. Additionally, I would not group the Florida Principal Leadership Behaviors into the two leadership styles, transformational and transactional. This created an unstructured sort, or one based on items that were not used previously in this manner. There has been no research linking these particular items with the labels transformational and transactional.

This practice Q-sort and analysis is narrow in scope, and no judgments about the individual principals should be drawn from their sorts. The purpose of this study was simply to find out whether the principals could be placed into groups or factors that seemed to make sense. Knowing the backgrounds of the participants gave me a lens on the analysis that many researchers may not have when conducting a Q-sort. It helped me understand why the participants may have grouped the way they did.

Citations

Brown, S. R. (n.d.). The history and principles of Q methodology in psychology and the social sciences. Retrieved Nov 2, 2005, from http://facstaff.uww.edu/cottlec/QArchive/B.

Brown, S. R. (1980). Political subjectivity: Applications of Q methodology in political science. New Haven, CT: Yale University Press.

Daniel, L. G. (1990). Operationalization of a frame of reference for studying organizational culture in middle schools (Doctoral dissertation, University of New Orleans, 1989). Dissertation Abstracts International, 50, 2320A. (UMI No. 9002883)

Hair, J., Tatham, R., Anderson, R., & Black, W. (1998). Multivariate data analysis (5th ed.). New York: Prentice Hall.

Kerlinger, F. (1973). Foundations of behavioral research (2nd ed.). New York: Holt, Rinehart and Winston.

Nesselroade, J., & Cattell, R. (Eds.). (1988). Handbook of multivariate experimental psychology (2nd ed.). New York: Plenum Press.

Porcerelli, J. H., Cogan, R., & Hibbard, S. (2004). Personality characteristics of partner violent men: A Q-sort approach. Journal of Personality Disorders, 18(2), 151-162.

Stephenson, W. (1953). The study of behavior (2nd ed.). Chicago: The University of Chicago Press.

Woosley, S. A., Hyman, R. E., & Graunke, S. S. (2004). Q sort and student affairs: A viable partnership? Journal of College Student Development, 45(2), 231-242.

Always Asking Questions & Always Learning

*Working on the University of Florida’s College of Education Online M.Ed. in Educational Leadership has given me a golden opportunity to learn more about Florida’s educational leaders. The last few years of my career have led me into very divergent, but exceptional, learning opportunities. From leading the development of curriculum for online courses to setting up methods for large-scale registration and submissions for district-based inquiry, I have rarely been able to rest on what I learned earlier in my career. I am constantly in challenging (but insanely exciting) situations.

With the Online M.Ed., I have been given the chance to seek out and interview principals at all levels of career experience for inclusion in the courses. I believe this “real-world” perspective from leaders in widely varying school contexts gives the students an extraordinary advantage. It has given me something extraordinary as well. Next to finishing my dissertation and teaching my elementary and high school students, learning from these wonderful leaders has been the best part of my career in education.

The leaders pictured include (L-R): Hudson Thomas of Pompano Beach High School (Broward County), Roxana Herrera of Palm Springs Elementary School in Hialeah (Miami-Dade County), Dr. Joseph Joyner, Superintendent of St. Johns County Public Schools, Lynette Shott of Flagler-Palm Coast High School (Flagler County), Scott Schneider of Terry Parker High School (Duval County), and Lawson Brown of Charles Duval Elementary School (Alachua County). These are only a few of the leaders we have interviewed.

The cover stars of the flier below are two exceptional leaders: Christy Gabbard and Stella Arduser of P. K. Yonge Developmental Research School at the University of Florida. They are also featured on our website now (https://education.ufl.edu/edleadership-med/).

Dean Young- The Art of Recklessness

*“The Truth is Marching In”

*What will be your legacy?


In 2003 (as I remember), I was asked this question. The question followed the most concise history of time I had ever seen: a timeline drawn in chalk across three of the four walls of a classroom by a professor as he spoke to his class. He speculatively marked the beginning of all time and walked through major historical events, such as the birth of the planet and the end of the dinosaurs, all the while contemplating the significance of time. Right before his line ended, near the very end of the third wall of blackboards and just after he had begun to chart the dawn of the human species (literally just a few inches of wall contained everything from the first humans to the Crusades to World War Two), he stopped briefly to ask someone their birthdate. He marked it on the line. His hand moved probably less than a millimeter. He marked their death. Then, the last remains of the chalkboard and his continued walk across the fourth wall of the room were accompanied by just this description: Time will continue, the endless march of time. You were here so briefly. What was important to you? What was changed for the better by you? What did you do with your brief existence? Anything? What will be your legacy?

Like any talk meant to inspire, this question could have been forgotten; I have heard it asked in hundreds of ways. What made this time different was the person asking it. He wasn’t trying to become the next self-help guru or to land his own TED talk. He was simply setting the stage for future lessons, aligning them to the differentiated goals of the students in his course (on the lifelong professional development of our own leadership abilities). Upon reflecting on this question, students could begin to connect the learning experiences to something they personally held to be important.

Things can appear divergent. To an outsider, every action taken or word uttered may seem purely reactive, a series of disparate events in a life. However, once someone knows something about their potential legacy…once they really understand what drives them, every decision…every action carries more responsibility to the vision they have set for themselves and the world around them.

I believe this idea of legacy applies to individuals and organizations alike. It is what helps give one a personal “brand”.

Over the last couple of months, I have been asking this question of the educational leaders I’ve interviewed. At the end of the course-based questions, I ask them: What do you want your legacy for education/students/teaching/etc. to be? Why? What will (fill in name of school or district or group of people) be like after you are gone? What are you doing to effect that change now?

The answers are all different. Every individual has a unique vision of their legacy, ranging from those who want to change the educational landscape for struggling students, to those who want to expand career opportunities in their district through access to technology-rich programs, to those who just want to be forgotten.

As I write these brief, unedited thoughts in the middle of the night, I am thinking about my personal goals, my mission, my potential legacy. I wonder whether those with whom I work have ever considered these things. I wonder whether our team has a collective mission…or whether anyone has thought about what our team’s legacy will be. What is our brand, and why?

Photo- shot from the Verrazano–Narrows Bridge on my way to running the New York Marathon again in 2011.

Chapel

*

Remains

*

Remains

*Baby Shower (Detail)

*Beautiful Book

*

Beautiful book found in the old PK Library.

The Trees

*Remembering Creativity and Imagination- Two Examples

1) Creativity and Imagination as a Learning Tool

It is no exaggeration to say that my students taught me a great deal about almost every facet of my life, including being open to the creative impulses I would incorporate into my own work. After teaching all day, I would somehow find myself at a canvas, a piano, almost anywhere I could “make” something. This morning, I remembered one student who, no matter the assignment, consistently impressed me. Her ability to access her imagination in such creative ways truly inspired me. An example of her work (made at fifteen years of age) can be seen below: a survival manual she created while reading Lord of the Flies. Every page was made from cut paper. Again, this is one small example from a student who created many works of art.

2) Creative Reflection for School Improvement

As a designer of professional development for principals, teachers, and school-based leadership teams, I had the opportunity to work with many leaders, both formal and informal. One of the most reflective of these inspirational leaders was Denee Hurst of Dixie County Public Schools. Principal Hurst’s principal leadership academy portfolio (in its organization and the sheer breadth of its documentation of reflective leadership) is one of the finest examples of a leader’s thoughts and projections about where she intended the school to go academically and culturally. Hurst included not only artifacts supporting her intention of raising student achievement (e.g., classroom walkthroughs, emails, professional development, aggregated data and projections), but also additional cultural ephemera, such as photographs of staff PD and school activities. Principal Hurst has been an advocate of teacher and principal inquiry and has participated in and taught at several inquiry showcases facilitated by the UF College of Education Center for School Improvement (led by Dr. Nancy Dana). Denee Hurst is a model of a leader who constantly seeks to engage with and improve the world around her.

Craft for baby room

*

Above

“This feels like a run-down 3rd grade classroom.” -Anonymous

Norman Hall courtyard, Spring 2014, UF

Further up the street. Angel crossing II. @annamaria0717 @kayllateal @kendylxx

Angel crossing. @annamaria0717 @kendylxx @kayllateal